Insider Brief

- MIT researchers created a speech-to-reality system that uses natural language, generative AI, and robotics to fabricate physical objects within minutes from spoken prompts.

- The system interprets a request, generates a 3D design, converts it into modular components, and directs a robotic arm to assemble items such as stools and shelves without requiring user expertise.

- The team is advancing sturdier connectors, scalable robotic pipelines, and gesture-based controls as they position the work as an early step toward accessible, on-demand manufacturing.

MIT researchers have developed a system that allows users to speak a request aloud and receive a fabricated object minutes later, demonstrating how natural language, generative AI, and robotics can combine to produce on-demand manufacturing.

According to MIT, the work, presented at the ACM Symposium on Computational Fabrication, shows that the system can assemble simple furniture and decorative items from modular parts without requiring users to know 3D modeling or robotic programming.

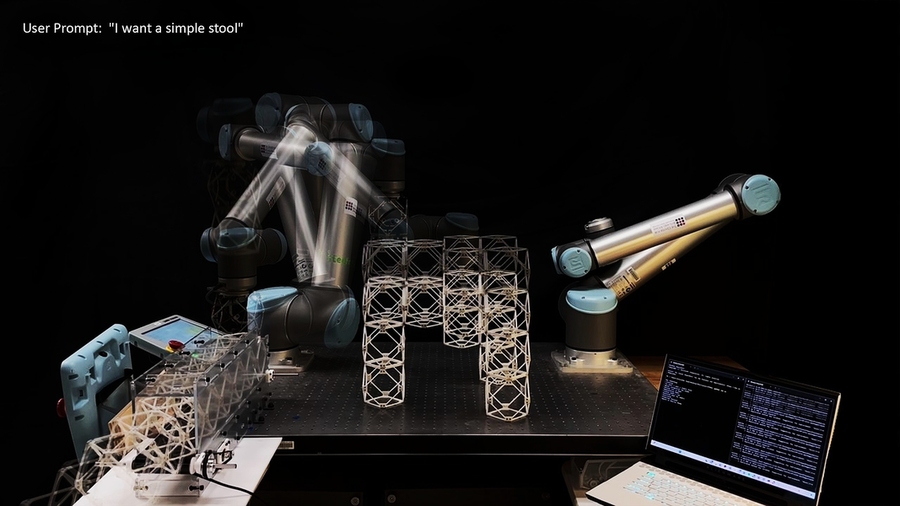

Researchers at MIT’s Center for Bits and Atoms, led by graduate student Alexander Htet Kyaw with collaborators Se Hwan Jeon and Miana Smith, built a workflow that begins with speech recognition and a large language model. The model interprets the user’s request — such as asking for a stool — and passes the result to a 3D generative AI system that produces a digital representation of the object. A voxel-based process then breaks that form into discrete components suitable for robotic assembly. After geometric checks for constraints like overhangs, part count, and structural connectivity, the system generates an assembly sequence and robotic path plan to build the finished object.
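To make the voxel stage of that workflow concrete, the following is a minimal Python sketch of the kind of checks and sequencing the paragraph describes. It is not the MIT team's implementation: the function names, the `max_parts` and `overhang_limit` thresholds, the overhang rule (in-layer distance to a voxel that rests on the ground or on another voxel), and the bottom-up greedy ordering are all assumptions chosen for illustration.

```python
from collections import deque

# Six face-adjacent offsets; the first four are the horizontal ones.
FACES = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def neighbours(v, voxels, offsets=FACES):
    """Return the voxels in `voxels` that touch `v` along the given offsets."""
    x, y, z = v
    return [(x + dx, y + dy, z + dz) for dx, dy, dz in offsets
            if (x + dx, y + dy, z + dz) in voxels]


def is_connected(voxels):
    """Structural connectivity: every voxel is reachable through shared faces."""
    if not voxels:
        return False
    start = next(iter(voxels))
    seen, queue = {start}, deque([start])
    while queue:
        for n in neighbours(queue.popleft(), voxels):
            if n not in seen:
                seen.add(n)
                queue.append(n)
    return len(seen) == len(voxels)


def max_overhang(voxels):
    """Largest in-layer step count from any voxel to a vertically supported one.

    Hypothetical rule: a voxel is vertically supported if it sits on the lowest
    layer or directly on top of another voxel. Returns None if some voxel
    cannot reach a supported voxel within its own layer.
    """
    ground = min(z for _, _, z in voxels)
    dist = {v: 0 for v in voxels
            if v[2] == ground or (v[0], v[1], v[2] - 1) in voxels}
    queue = deque(dist)
    while queue:  # multi-source breadth-first search, one layer at a time
        v = queue.popleft()
        for n in neighbours(v, voxels, FACES[:4]):
            if n not in dist:
                dist[n] = dist[v] + 1
                queue.append(n)
    return max(dist.values()) if len(dist) == len(voxels) else None


def validate(voxels, max_parts=40, overhang_limit=2):
    """Apply the three checks; both thresholds are illustrative assumptions."""
    if not voxels or len(voxels) > max_parts or not is_connected(voxels):
        return False
    overhang = max_overhang(voxels)
    return overhang is not None and overhang <= overhang_limit


def assembly_sequence(voxels):
    """Greedy bottom-up order: each voxel rests on the ground or touches a placed one."""
    ground = min(z for _, _, z in voxels)
    remaining = sorted(voxels, key=lambda v: (v[2], v[0], v[1]))
    placed, order = set(), []
    while remaining:
        for v in remaining:
            if v[2] == ground or neighbours(v, placed):
                break
        else:
            raise ValueError("no placeable voxel; structure is disconnected")
        remaining.remove(v)
        placed.add(v)
        order.append(v)
    return order


# A toy stool: four two-voxel legs under a 3x3 seat.
legs = {(x, y, z) for x in (0, 2) for y in (0, 2) for z in (0, 1)}
seat = {(x, y, 2) for x in range(3) for y in range(3)}
stool = legs | seat

if __name__ == "__main__":
    print(validate(stool))
    print(assembly_sequence(stool))
```

In a fuller pipeline, each entry in the returned order would be handed to a motion planner that computes the arm's pick-and-place path; that step is omitted here because it depends on the specific robot and its software.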

“These are rapidly advancing areas of research that haven’t been brought together before in a way that you can actually make physical objects just from a simple speech prompt,” Kyaw said.

The researchers report that the system can turn spoken prompts into items such as stools, shelves, chairs, and small tables within roughly five minutes, far faster than typical 3D printing workflows. They argue that this approach could open fabrication to people who lack technical experience, since natural language becomes the main design interface. The researchers note that modular components also make objects reversible: assemblies can be taken apart and reused, which reduces material waste and allows objects to be repurposed, for example by reassembling the pieces of a sofa into a bed.

MIT indicated the team is now investigating ways to strengthen the assembled furniture by replacing magnetic connectors with sturdier joints. They are also developing pipelines that allow mobile robots to follow the same voxel-to-assembly logic at different scales. Future versions of the system may incorporate gesture inputs and augmented-reality guidance so that speech and movement work together as control signals for fabrication.

The researchers said the work illustrates how rapid advances in AI-driven design, natural-language interfaces, and robotic construction can reshape everyday manufacturing. They view the system as an early step toward on-demand physical creation, in which objects can be generated as easily as requesting them.

“This project is an interface between humans, AI, and robots to co-create the world around us,” Kyaw added. “Imagine a scenario where you say ‘I want a chair,’ and within five minutes a physical chair materializes in front of you.”

Image credit: Alexander Kyaw and the researchers.